Re:Cycle

Re:Cycle is a generative ambient video art piece based on nature imagery captured in the Canadian Rocky Mountains. Ambient video is designed to play in the background of our lives. It is a moving image form well suited to the ubiquitous distribution of ever-larger video screens. The visual aesthetic supports a viewing stance that is an alternative to mainstream media - one that is quieter and more contemplative - an aesthetic of calmness rather than enforced immersion. An ambient video work is therefore difficult to create - it can never require our attention, but must always reward that attention when it is offered. A central aesthetic challenge for this form is that it must also support repeated viewing. Re:Cycle relies on a generative recombinant strategy for ongoing variability, and therefore a higher degree of replayability. It does so by drawing on two random-access databases: one of video clips, and another of video transition effects. The piece will run indefinitely, joining clips and transitions from the two databases in randomly varied combinations.
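
The recombinant strategy described above can be sketched in a few lines of code. The piece itself was built in MaxMSP-Jitter and Max6, so this Python version is only an illustration of the idea, not the actual engine. The function name `recombine` and the clip and transition names are hypothetical, and the sketch assumes each pick is an independent uniform random choice.

```python
import random
from itertools import islice


def recombine(clips, transitions, rng=None):
    """Yield an endless stream of (clip, transition) pairs.

    Assumption: each clip and each transition is drawn independently
    and uniformly at random from its own collection.
    """
    rng = rng or random.Random()
    while True:
        yield rng.choice(clips), rng.choice(transitions)


# Hypothetical stand-ins for the two databases.
clips = ["snow_ridge", "frozen_creek", "cloud_bank", "pine_slope"]
transitions = ["dissolve", "luma_wipe", "soft_fade"]

# The generator never ends, so take only the first few pairs to inspect.
for clip, transition in islice(recombine(clips, transitions), 5):
    print(f"{clip} -> ({transition})")
```

Because the generator never terminates, the sequence can run for as long as the display is on, and two viewings are unlikely to share the same order.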

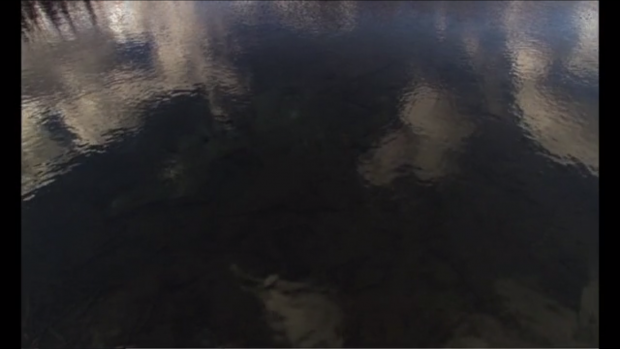

Creative input to the system derives in large part from the selection of shots that the artist uses. I've been fortunate to collaborate with a brilliant cinematographer - Glen Crawford from Canmore, Alberta. Re:Cycle's landscape images include a range of elements such as snow, trees, ice, clouds and water - reflecting a deep respect for the natural environment. These images also produce the ‘ambient’ quality I am seeking. They are engaging when viewed directly, but also move easily to the background when not. Another artist might choose very different images, and the resulting work could be completely different. While I enjoy the complete control offered by traditional linear video art, I am intrigued by the different set of artistic decisions this simple generative platform can support.

The current version of the generative engine for Re:Cycle also incorporates a deeper level of artistic intervention by integrating metadata into the dynamics of the system. Each video clip is given one or more metadata tags reflecting the content of the individual shot. I have used the tags to nuance the random operation of the engine, and to group and present images in sequences that share a common content element (such as "snow" or "water"). The resulting generative video work presents a stronger sense of visual flow, and the sequencing begins to exhibit a degree of semantic continuity.
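
One way to picture tag-nuanced sequencing is the sketch below. It is written in Python for illustration only and is not the Max6 implementation. The function `tagged_sequence`, the fixed `run_length`, and the sample clips and tags are all assumptions: the source does not say how long a shared-tag sequence lasts or how the next tag is chosen.

```python
import random
from itertools import islice


def tagged_sequence(clip_tags, transitions, run_length=3, rng=None):
    """Yield (clip, transition, tag) triples in runs that share one tag.

    clip_tags maps each clip name to its set of metadata tags.
    Assumptions: the tag for each run is chosen at random, and every
    run has the same fixed length.
    """
    rng = rng or random.Random()
    all_tags = sorted({tag for tags in clip_tags.values() for tag in tags})
    while True:
        tag = rng.choice(all_tags)
        # Only clips carrying the current tag are eligible for this run.
        pool = [clip for clip, tags in clip_tags.items() if tag in tags]
        for _ in range(run_length):
            yield rng.choice(pool), rng.choice(transitions), tag


# Hypothetical clips, each with one or more content tags.
clip_tags = {
    "snow_ridge": {"snow"},
    "frozen_creek": {"ice", "water"},
    "spring_melt": {"snow", "water"},
    "cloud_bank": {"clouds"},
}
transitions = ["dissolve", "luma_wipe", "soft_fade"]

for clip, transition, tag in islice(tagged_sequence(clip_tags, transitions), 6):
    print(f"[{tag}] {clip} -> ({transition})")
```

Selection stays random within each run, but consecutive clips share a content element, which is the kind of grouping the paragraph above describes.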

The overall design of the piece incorporates a series of decisions (number of shots, quality of shots, transition selection, algorithmic process) that strike a balance between replayability/variation on the one hand, and aesthetic control on the other.

Original program in MaxMSP-Jitter. Revised program in Max6.

Director of Photography: Glen Crawford

Version 2 Programming: Sayeedeh Bayatpour, Tom Calvert

Original Programming: Wakiko Suzuki, Brian Quan, Majid Bagheri

Producer: Justine Bizzocchi